Running local LLMs with llama.cpp

Build llama.cpp with CUDA support, run GGUF models through llama-server, and tune common flags for fitting larger or MoE models on an 8GB RTX 4060 laptop GPU.

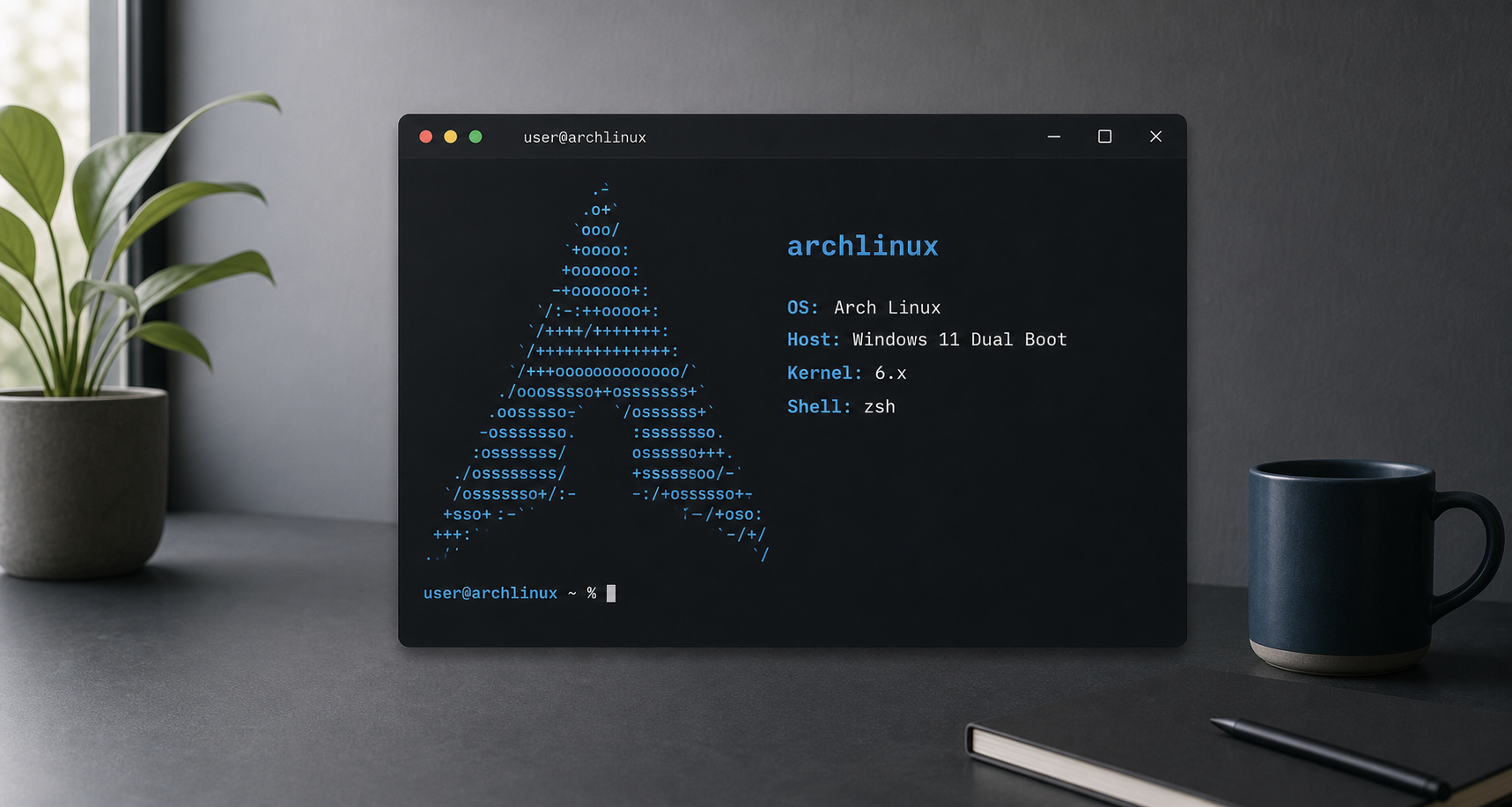